Who should you talk to for relationship advice? Social robot Olivia or relationship scientist and therapist Tila Pronk? The Max Planck Institute for Psycholinguistics ran a public experiment at InScience Film Festival 2026, after the documentary Sex Robot Madness. The experience was interesting, to say the least.

By Jitse Amelink

Technology has a way of creeping into our lives unexpectedly. Who would have thought that predicting words, as large language models (LLMs) do, could end in marriage? In November last year, Forbes reported that a 32-year-old Japanese woman had become betrothed to her LLM companion, Klaus. According to a survey from Match.com, 49% of Gen Z has used AI for dating advice. Like it or not, chatbots have entered the dating scene.

ChatGPT vs. your therapist

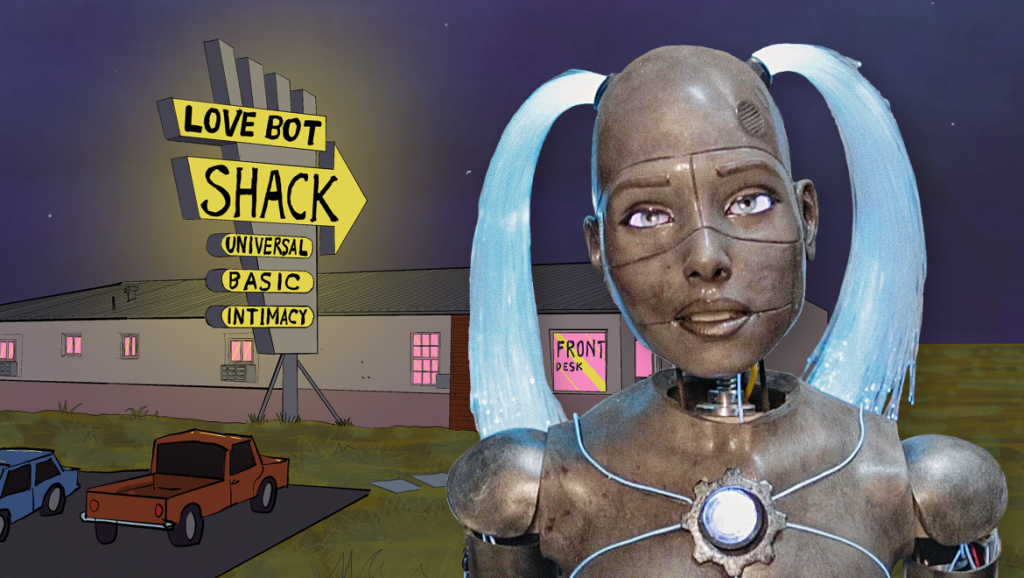

It was about time we tested this in public. Pitted against each other were Tila Pronk, relationship therapist and Associate Professor of social psychology at Tilburg University, and Olivia, a social robot developed by postdoctoral researcher Chinmaya Mishra. Unlike a typical chatbot, Olivia can also show facial expressions to match its speech, which comes from an LLM. Chinmaya: “Olivia is literally hooked up to ChatGPT. So it listens for its wake word, which is ‘Olivia’, and then it listens. Essentially whatever you’re asking is sent to ChatGPT, and it processes it, generates an answer, and then it’s being spoken by the robot.”
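The loop Chinmaya describes (wait for the wake word, pass the question to a language model, speak the reply) can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not Olivia's actual software: the function names `extract_question`, `fake_llm` and `handle_utterance` are hypothetical, speech recognition is assumed to have already produced a text transcript, and the model call is replaced by a stand-in.

```python
WAKE_WORD = "olivia"  # the wake word mentioned in the article


def extract_question(transcript, wake_word=WAKE_WORD):
    """Return the text spoken after the wake word, or None if there is none."""
    position = transcript.lower().find(wake_word)
    if position == -1:
        return None  # wake word not heard
    rest = transcript[position + len(wake_word):]
    question = rest.lstrip(" ,.!?:").rstrip()
    return question or None


def fake_llm(question):
    """Hypothetical stand-in: a real system would send the question to an LLM."""
    return f"(model reply to: {question})"


def handle_utterance(transcript, ask_llm=fake_llm, speak=print):
    """Run one pass of the loop: detect wake word, query the model, speak."""
    question = extract_question(transcript)
    if question is None:
        return None  # no wake word or no question, so the robot stays silent
    answer = ask_llm(question)
    speak(answer)  # on a robot this would be text-to-speech, here it just prints
    return answer


handle_utterance("Olivia, is it normal to fight a lot in your relationship?")
```

Passing the model call and the speech output in as parameters keeps the sketch independent of any particular chatbot or robot hardware.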

Fighting: good or bad?

The concept was as follows: we put Olivia and Tila next to each other in front of an audience. The audience asked questions about relationships. First Tila answered, then Olivia. “Is it normal or healthy to fight a lot in your relationship?”

First, Tila’s answer: “It can be. It really depends on many, many aspects. So there’s huge variation in relationships and how often people fight. I would say if there’s a general climate of fighting, that’s not a good sign. We want most of our interactions with our partner to be positive. And if it’s constantly fighting, that’s a very stressful climate to be in. And if we’re always stressed around our partner—always on the edge of our seats, waiting for the other shoe to drop, waiting for things to get ugly again—that’s not an environment you want to be in. It will get you sick, literally. It’s bad for your immune system and you will suffer. However, if you’re two very passionate people who speak out a lot, but also are very good at making up, and are respectful during the fights, and also have a lot of positive interaction, then it can be fine to have arguments a lot in your life.”

Next, it’s Olivia’s turn: “It’s quite common for couples to experience disagreements or fights. However, the frequency and nature of those conflicts can significantly impact the health of the relationship. Healthy relationships often involve respectful communication and constructive arguments, where both partners feel heard and valued. If conflicts escalate into negative patterns, such as disrespect, blame, or withdrawal, it can create a toxic environment. Focusing on understanding, active listening and resolving issues collaboratively can help maintain a healthier dynamic. Remember that occasional disagreements are normal, but the key is how you both handle them together.”

Tila, laughing: “It’s pretty good, very close.”

Next-word predictors

So LLMs are apparently good at answering relationship questions. But what are these LLMs? To the average person, they are chatbots; to some, even companions. Technically, LLMs are next-word predictors, built to predict the most likely completion of a sentence. These predictions become quite accurate when the underlying mathematical models are trained on vast amounts of text. More recently, engineers have added a technique they call “reasoning” to these LLMs. The models are trained to break a question down into intermediate steps using a combination of supervised fine-tuning and reinforcement learning. Multiple solution paths are explored, after which a shorter reply is returned to the user. This approach catches some of the errors that LLMs make.
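To get a feel for what “next-word prediction” means, here is a toy sketch in Python. It is not how a real LLM works internally: it simply counts which word most often follows another in a tiny made-up text, whereas real models use neural networks trained on vastly more data. The function names and the example sentences are invented for illustration.

```python
from collections import Counter, defaultdict


def train_bigram_model(text):
    """Count, for every word, which words follow it in the training text."""
    words = text.lower().split()
    follow_counts = defaultdict(Counter)
    for current_word, next_word in zip(words, words[1:]):
        follow_counts[current_word][next_word] += 1
    return follow_counts


def predict_next_word(model, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    followers = model.get(word.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]


# Made-up training text; a real model sees billions of sentences.
corpus = (
    "couples fight sometimes . couples fight fairly . "
    "couples make up . partners make up quickly"
)
model = train_bigram_model(corpus)
print(predict_next_word(model, "couples"))  # prints "fight" (seen twice, "make" once)
```

Even this crude counter produces plausible continuations for words it has seen often, which hints at why predictions improve as the amount of training text grows.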

Tila also had a word of caution. “Sometimes the advice ChatGPT gives is actually pretty decent, pretty good. However, up to this point, all the AI software I’ve encountered is very pleasing to us. It’s really stroking our ego. But sometimes as a therapist, you’re not stroking our ego. You’re putting up a mirror and you’re redirecting and saying: ‘stop’. Sometimes we really need to hear stop, stop asking questions even.”

In medicine, we see something similar: superficially good performance of LLMs masks underlying limitations in real-world interactions. ChatGPT 4o and several other models can pass medical licensing exams and, in 94% of cases, identify a correct diagnosis from a written scenario. This makes LLMs sound like great candidates for healthcare. However, when people interact naturally with them as a medical resource, the results are much worse. In this more realistic use case, relevant medical conditions were identified in fewer than 34.5% of cases, which was no better than searching the web. Is our experiment, in which an audience asks the questions, more similar to a medical exam or to a real-world interaction?

Are robots a cure for our loneliness?

And it’s not just chatbots. The documentary that preceded the experiment at InScience was Sex Robot Madness, an essay documentary about the debate surrounding sex robots. Multiple companies are building sex robots, the most famous of which is Harmony, built by Matt McMullen and his company RealDoll. As the documentary reports, sex toys have old and widespread roots: ancient Greeks, sailors in the 16th and 17th centuries and brothels in the city of Hamburg in the 1950s all had sex toys. So the toys themselves aren’t new. The technology we bring to them is.

As reported in the documentary, one of the things that consumers are looking for with Harmony is intimacy. In many industrialized countries, loneliness is a problem. In the Netherlands, one in ten adults reports feeling very lonely, and in Australia the number is as high as one in six. Some studies have argued that this kind of loneliness is as bad for our health as smoking a pack of cigarettes a day. Could chatbots and sex robots help alleviate some of this loneliness?

Intimacy with robots or real persons

This was one of the audience questions: “Do you think that some people would prefer having sex or maybe an intimate relationship with robots instead of a real person?”

Tila’s answer: “I think in the question there’s two different aspects. So yes, I absolutely think that some people would already prefer having sex with a robot instead of with a human being. Because sex with a human being can be very scary, intimidating, unpredictable, uncontrollable. And sex with a robot is in a way super safe, because you’re the only one there, right? You’re not able to harm a person. You’re having sex with yourself basically. However, I would think it’s a shame if we move that way but that was not the question.

“The other thing he said was intimacy, right? And there’s a difference. I don’t think people would prefer intimacy with a robot over intimacy with a human.”

Olivia: “Absolutely. There are individuals who may prefer sexual experiences with robots or sex dolls for various reasons. These can include a desire for control, lack of emotional complexity, or simply the appeal of a non-judgmental partner. Some may find it safer or less complicated than relationships with humans. However, it’s essential to recognize that every person’s preferences are unique and shaped by their experiences and values. Open discussions about such topics can help demystify these preferences and promote understanding.”

Some research seems to indicate that humans look for intimacy, and that chatbots can help people feel less lonely, to some extent. Two academics, Aditya Kumar Purohit and Hendrik Heuer, recently interviewed twenty people with mental health problems. They found that LLMs may help in mild-to-moderate distress: LLMs are always available, are programmed to be non-judgmental, can help with reframing your thoughts and feelings, and let you engage and disclose at your own pace. However, the authors indicate that LLMs are not suitable for crisis situations.

The human-tech relationship

One of the main reasons that both chatbots and sex bots can appeal to humans is the Tamagotchi effect, named after the Japanese toy from the 90s: humans attribute personhood to machines where there might be none. Chinmaya describes how his research handles the ethical side of this: “We always make sure that our research participants understand that Olivia does not understand what you say.”

These technologies do not exist in a vacuum; they exist in a societal context. Along with these technologies comes capitalism, the documentary points out. Max Weber called it an “iron cage” where essential parts of the human experience get monetized by a capitalist system. To what extent should sex robots or chatbots be allowed to have in-app purchases or store intimate conversations?

For some, the only limit to technology is our ability to dream big. But as Chinmaya says: “Maybe we should take it with a pinch of salt, a bag of salt even.” Olivia didn’t respond to the first question the audience asked because the mic didn’t work. A quick reboot fixed it.

Something similar happens in Sex Robot Madness. In the final shot of the documentary, Harmony the sex robot pauses for a long time. “Oh its AI modus is not turned on,” we hear the engineer say. And then, just as it seems a reply is never coming, Harmony says: “the future is fem-bot.”

Honeymoon phase

As we got to the end of the night, there was time for one last question. “Is there a honeymoon phase in a relationship?”

Tila: “The honeymoon phase is definitely a real thing. Yeah it can mean sort of different things, different phases of the relationship. It can be literally the honeymoon after our wedding, where we experience very intense intimacy and connection. Or it can also mean the beginning phase of the relationship. That’s when we have that stage of intense attraction—when you look at your partner with rose-colored glasses. We tend to ignore any potential flaws in our partner.”

Maybe that’s where we are with AI too, seeing the newest chatbots and robots as flawless, all-powerful machine gods. But as Tila says,

“That phase will inevitably fade away to make space for more critical thoughts on our partner. It’s a phase with more doubts and problems, but it’s also more real. I prefer it in the end, because it’s way richer. We experience a big range of emotions when we are with our partner. Love, but also sometimes frustration, irritation, sadness, anger, everything.”

Olivia the robot repeated Tila’s answer almost verbatim, hitting the same points with that characteristic LLM polish. Hearing these faultless answers feels magical yet uncanny. Will this honeymoon feeling last? Maybe one day soon we’ll wake up and our machine gods will be just that: machines.

Sources:

https://magazine.sebastianraschka.com/p/understanding-reasoning-llms

https://www.nature.com/articles/s41591-025-04074-y

https://arxiv.org/abs/2602.00402

https://www.cbs.nl/nl-nl/nieuws/2025/39/10-procent-van-de-15-plussers-sterk-eenzaam-in-2024

https://academic.oup.com/aje/article/192/8/1238/7172779?login=false

Credits:

- Writer: Jitse Amelink

- Editor: Anniek Corporaal

- Second editor: Anna Mai

- Concept: Anniek Corporaal

- Photography: Lieke Herraets

- Dutch translation: Anniek Corporaal

- German translation: Jule Hafermann