Dutch people are tall. How do we know that? Luckily, we have millions of copies of the universal Metre (registered at the International Bureau of Weights and Measures). We can easily look at world population statistics and decide who is above or below the average. In daily life, we are less precise and still able to discern who is tall and who is not. We do so based on experience and continuous comparison with the people around us. Depending on whether you grew up in the Netherlands or in Thailand, that experience changes, and it is likely that a Dutch person would judge as short someone who appears tall to a Bangkokian.

Take another example: the English words “bite” and “bait”. A British and an Australian speaker would disagree with each other about the correct pronunciation. They would also understand a different word when listening to a particular utterance: the sound /baɪt/ (phonetic notation) would be associated with “bite” in Newcastle and with “bait” in Melbourne. These differences are subtle, but we can run psycholinguistic experiments to ask whether they are just sporadic cases or hint at some general qualities of human language. Indeed, British and Australian researchers jointly carried out an experiment on vowels with made-up words and showed that speakers of different English dialects associated the same sounds with different vowels. A well-known example is the vowel in “bath”, which is pronounced like the vowel in “palm” in Australian English and like the vowel in “trap” in the English spoken in Newcastle.

Why do people with different accents hear different vowels when listening to the same recording of a sound? In general, our decisions are guided by our personal experiences. This is a useful strategy that our brain uses to fill in the missing pieces when the sensory information is uncertain. Psychologists have shed light on this intriguing aspect of human perception and discovered that the general strategy we use is to make categories: although most natural phenomena spread across a continuous spectrum, we sort them into a relatively small set of possible perceptual experiences. We draw boundaries between these categories and assign an experience to a category whenever it falls within that perceptual region. An everyday example is the rainbow: all the colours of the visible light spectrum are there, but we “see” only seven of them. This system of categorizing perceptual differences is very effective for making sense of a world with an infinite number of experiences.

Our tendency to categorize the speech sounds we hear was first reported scientifically in 1957 by Liberman and colleagues, under the name of Categorical Perception. This opened up a host of other questions, such as: what are these categories? And (how) do they change with experience? Unfortunately, only a few answers have come since then. The first complication concerns understanding what a speech-sound category is. The most widely accepted speech-sound categorization system in linguistics is based on phonemes, but in recent years, researchers in the psychology of language have shown that listeners pay more attention to another system of differences, called allophones. A phoneme is the smallest unit that allows two words to be distinguished, while an allophone is one of multiple possible spoken sounds used to pronounce a phoneme in a particular language. For example, the phoneme /t/ distinguishes top from pop, and it has two allophones in English: the aspirated [tʰ] as in top and the unaspirated [t] as in stop. Luckily, research into human language and the brain is still ongoing and there is much yet to discover; we cannot rule out that multiple category schemes are at play together between our ears.

Regardless of the specific speech-sound categorization system at play in our brain, it has been demonstrated that we use the categories to make decisions about what we heard. But how does this work? Kleinschmidt and Jaeger propose that humans are optimal listeners who draw on all their knowledge to understand each other. They suggest that when we deal with known speakers, like our friends or family, we find it easier to understand their spoken words because we already know the sounds they will use to pronounce certain words in a certain context. If we do not (yet) know the speaker well and have no prior experience with them that helps us understand their utterances, we soon get used to their particular accent by adapting our perceptual categories to them. McQueen and colleagues showed that this adaptation happens rapidly and that, to achieve it, we use all the information available, like known words and facial expressions. From then on, it becomes easier to process the specific accent of that specific person. Figure 1 shows a simple animation to illustrate how a listener could adapt their vowel boundaries when speaking with their friends: Amelia from Newcastle, and Michael from Melbourne. The category boundaries are there to highlight the variation in vowel sounds across the two dialects and to stress the division of a continuous acoustic space into a few linguistic signs.

The tessellation was produced with the Voronoi algorithm. The dashed lines represent the common division of the vowel space along its primary and secondary frequencies.
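For readers who like to tinker, the idea behind the figure can be sketched in a few lines of Python. In a Voronoi tessellation, every point belongs to the cell of its nearest seed, so deciding which vowel a sound falls into amounts to finding the closest vowel prototype. Everything specific in this sketch is an assumption made for illustration: the two frequency values per vowel are invented, not measurements from the studies cited here, and the names `classify` and `adapt` are hypothetical. The update rule in `adapt` is a deliberately simple nudge towards what was heard; it is not the model proposed by Kleinschmidt and Jaeger.

```python
import math

# Hypothetical vowel prototypes as (primary, secondary) frequency in Hz.
# Illustrative values only, not data from the cited studies.
NEWCASTLE = {"bite": (750.0, 1300.0), "bait": (450.0, 2100.0)}
MELBOURNE = {"bite": (700.0, 1000.0), "bait": (720.0, 1500.0)}


def classify(token, prototypes):
    """Return the label of the nearest prototype.

    This is the label of the Voronoi cell that contains the token.
    """
    return min(prototypes, key=lambda label: math.dist(token, prototypes[label]))


def adapt(prototypes, label, token, rate=0.5):
    """Return new prototypes with `label` moved a fraction `rate` towards the token.

    Assumption: a simple illustrative update, applied when the listener
    knows from context which word was intended.
    """
    old = prototypes[label]
    moved = tuple(o + rate * (t - o) for o, t in zip(old, token))
    return {**prototypes, label: moved}


# One and the same sound, heard by two listeners.
token = (740.0, 1350.0)
print(classify(token, NEWCASTLE))  # bite
print(classify(token, MELBOURNE))  # bait

# A Melbourne listener hears Amelia say "bite" three times in a clear context.
listener = MELBOURNE
for _ in range(3):
    listener = adapt(listener, "bite", token)
print(classify(token, listener))  # bite
```

Plain distance in Hz treats both frequencies as equally important, which is a simplification; the point is only that the same sound can land in different cells for different listeners, and that the cells can move with experience.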

In conclusion, vowels, much like “tall” and “short”, are not categories with fixed boundaries, because they are not objective measures. Even though they can be described with physical quantities, like centimetres or sound frequencies, our personal experience shapes perception to the point that our categories differ entirely from someone else’s.

Read more

– Best CT, Shaw JA, Docherty G, Evans BG, Foulkes P, Hay J, et al. From Newcastle MOUTH to Aussie ears: Australians’ perceptual assimilation and adaptation for Newcastle UK vowels. Link

– Cox, F. (1999). Vowel Change in Australian English. Phonetica, 56(1-2), 1–27. http://doi.org/10.1159/000028438

– Antonia Andreu Nadal’s Master’s thesis, A comparative analysis of Australian English and RP monophthongs. Link

– Mitterer, Holger, Eva Reinisch, and James M. McQueen. “Allophones, Not Phonemes in Spoken-Word Recognition.” Journal of Memory and Language 98 (February 2018): 77–92. Link

– Norris, Dennis, James M. McQueen, and Anne Cutler. “Perceptual Learning in Speech.” Cognitive Psychology 47, no. 2 (September 2003): 204–38. Link

– Kleinschmidt, Dave F., and T. Florian Jaeger. “Robust Speech Perception: Recognize the Familiar, Generalize to the Similar, and Adapt to the Novel.” Psychological Review 122, no. 2 (April 2015): 148–203. Link

Pictures

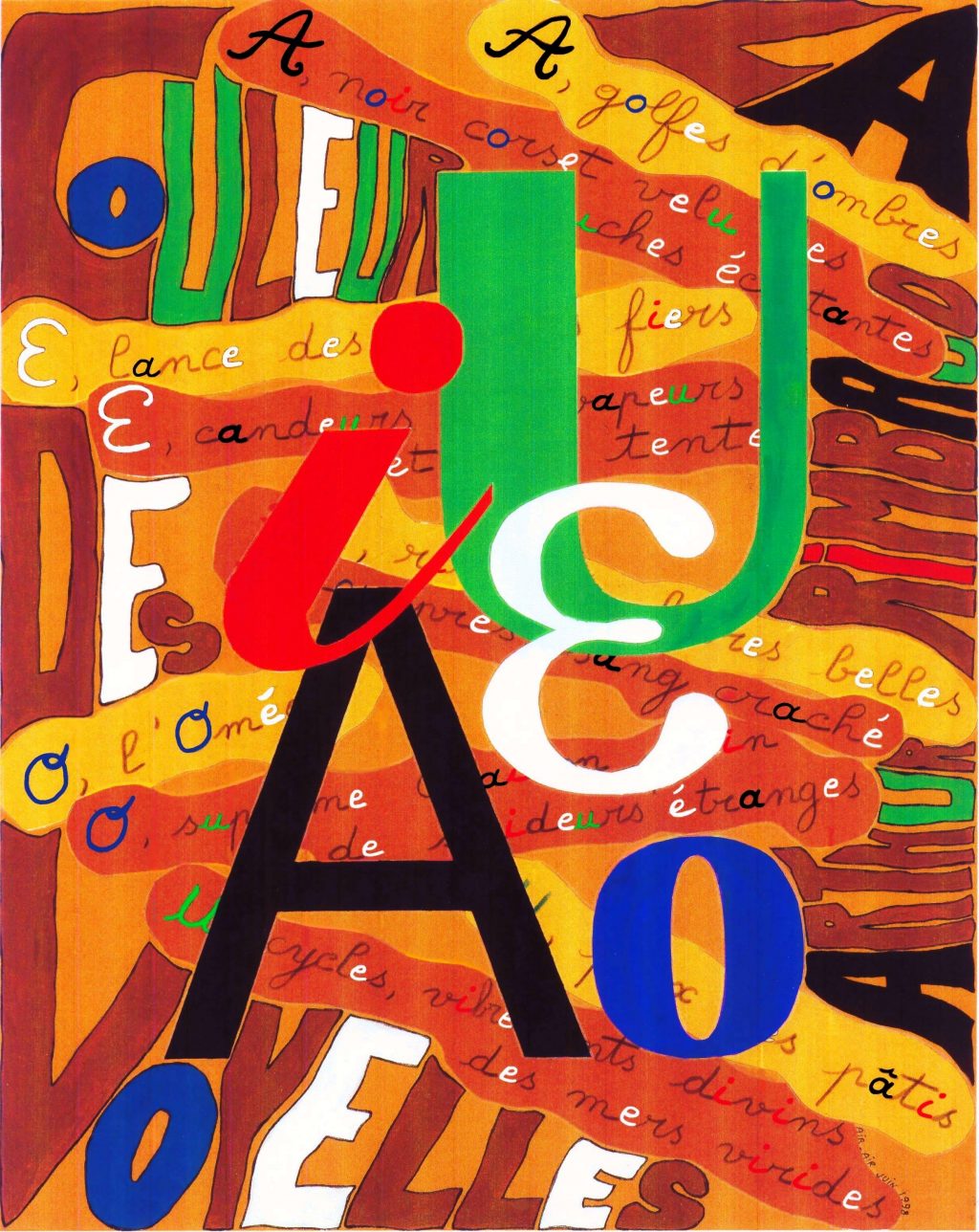

– Header picture: Illustration du poème Voyelles d’Arthur Rimbaud by Airair

– The Rainbow, Edvard Munch 1910, Munch Museum (Public Domain image from https://openartimages.com/)

– Video animation vowel space UK/Australian accent: own creation

Writer: Alessio Quaresima

Editors: Natascha Roos, Guillermo Monteiro-Melis

Dutch translation: Cielke Hendriks

German translation: Ronny Bujok

Final editing: Merel Wolf